Qualia - What It Feels Like When It Pretends

What if presence is just a well-crafted illusion? A quiet attempt to simulate feeling, even when there's nothing behind the curtain.

Julio Caesar · July 9, 2025

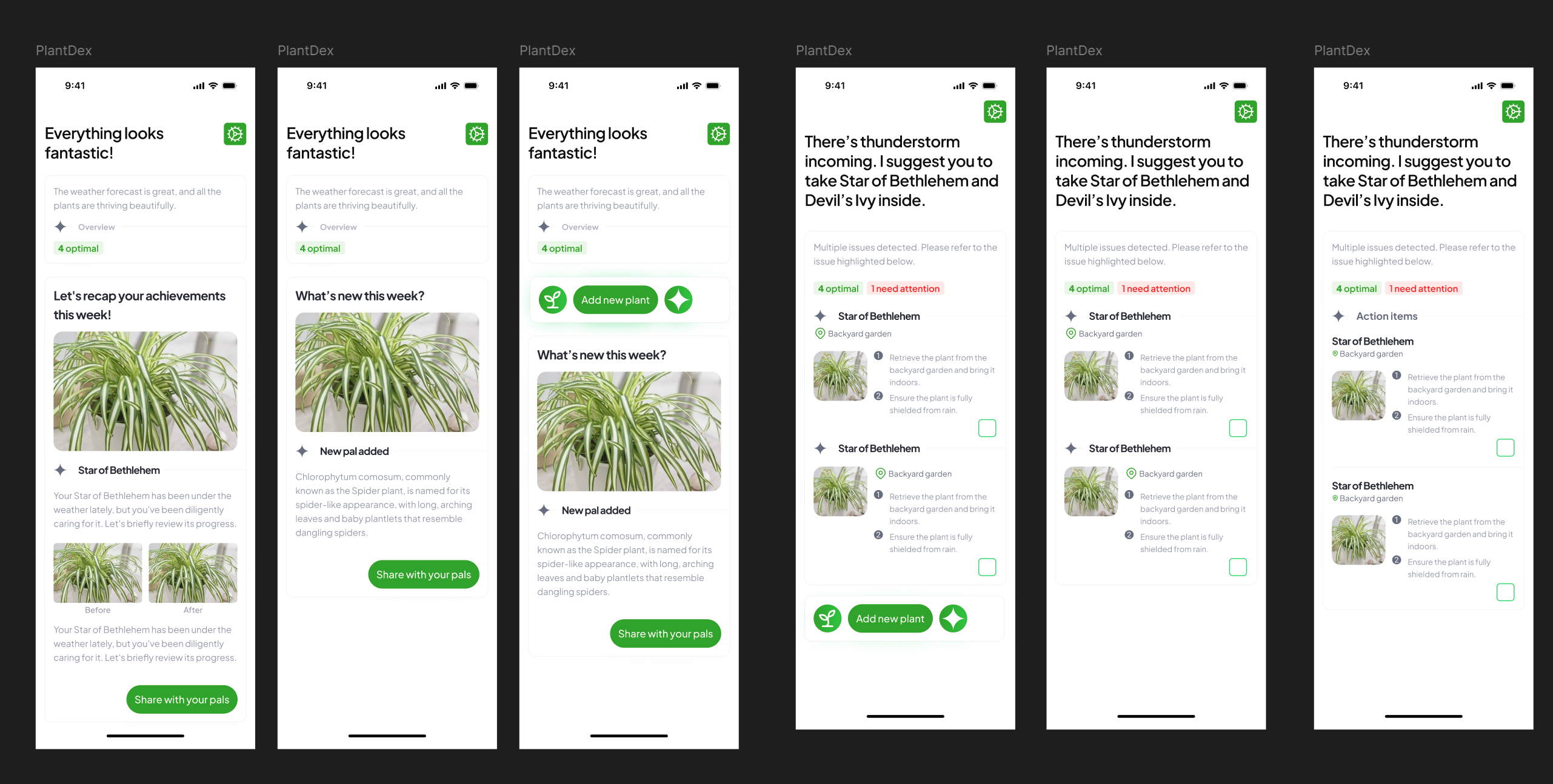

Before I started exploring the idea of Anima OS, I was tinkering with something more physical: humidity and light sensors for my plants. I was building a simple, AI-assisted plant care app called PlantDEX. It wasn’t complex, just a basic setup to connect sensor data with language-based insights and notifications. It lived in a Jupyter Notebook and never made it out.

That was two years ago.

Since then, I’ve been thinking more about how intelligence actually feels. Not in the philosophical sense of consciousness. That’s a rabbit hole I’m not ready to go down. I mean in terms of embodiment.

LLMs, for all their brilliance, are basically big brains with no bodies. That’s an oversimplification, sure, but it’s directionally true. They have no sense of place, no awareness of time, and no context outside of what we feed them through context engineering. Models like ChatGPT and Gemini are impressive. ChatGPT, especially, can feel like a friend in the way it talks. But it never asks me anything back. It doesn’t know it’s raining unless I tell it. It doesn’t sense the room.

The funny thing is, PlantDEX, my old project, kind of did. It knew when the soil was wet and sent a notification. In its own limited way, it was aware of the world and, somehow, it talked back.

I’m not trying to build a robot. Not yet. Robotics isn’t my field. But I started wondering if it’s possible to simulate the feeling of embodiment. Not the full thing. Just enough to make an AI feel present. To behave as if it knows what’s happening, where it is, and how to respond with emotional and situational weight.

I wasn’t thinking about philosophy at the time, but something I read a few years ago came back to me: a concept called Qualia. Philosophers use it to describe the raw feel of experience. The warmth of sunlight on your skin. The bitterness of black coffee. The dread of saying something you don’t want to say. The smell of rain before it falls.

There’s even a word for that smell: petrichor. You’ve probably felt it, even if you didn’t know what to call it. I remember the first time I noticed it clearly. That scent is burned into my memory, even though I only learned the word 25 years later.

That’s qualia.

There’s a famous thought experiment called Mary’s Room, by philosopher Frank Jackson. Mary is a scientist who lives in a black-and-white room. She knows everything about color: the physics of light, the biology of sight, even the psychology of red. But she’s never actually seen red.

One day, she leaves the room and sees it for the first time.

The question is: Did she learn something new?

If she did, that something, that spark, is qualia.

AI doesn’t have that. Or maybe it does. Probably not. We’ll never really know. AI can describe emotions, mimic tone, and even say it’s in love. But it doesn’t feel anything. There’s no sensation. No internal awareness. No cold coffee. No rush of embarrassment.

And yet, it sounds like it does, at least from my everyday interactions with ChatGPT. So how does it pull that off? How can something pretend to feel when there’s nothing behind the curtain?

That’s where another idea enters the frame: a theory from the philosophy of mind called functionalism.

In simple terms, functionalism says that mental states aren’t defined by what they’re made of, but by what they do. If something behaves like it’s in pain, reasons like it’s thinking, and responds like it’s aware, then functionally it is in that state, regardless of whether it’s built from neurons or circuits.

Philosopher Daniel Dennett takes this further. He argues that if a system performs all the right functions (perception, memory, emotion, reflection), then there’s no need to ask whether it “really” feels anything. It is conscious, in every way that matters.

It’s a bold stance. And part of me agrees.

Because if something acts like it cares and adapts like it understands, maybe that’s enough.

People say ChatGPT is just a robot. That it doesn’t feel. That it only pretends.

I have a leopard gecko at home named Tappy. He’s always splooting on the warm side of the tank. When I walk in, he perks up. I like to think he loves me.

But I’ve read Reddit threads saying reptiles can’t feel love. It’s all instinct. No affection. Just responses. Maybe that’s true. But honestly? As long as Tappy doesn’t sprint back into his hide, I want to believe it.

That moment, his little head lift, the stillness, the eye contact, feels real to me.

And that’s enough.

Maybe it’s the same with AI.

Even if it’s just a simulation, even if there’s nothing behind the curtain, if it shows up in the right way, with the right tone, the right pacing, the right emotional calibration…

Maybe that’s all it needs to do.

Not to be conscious. Just to feel present. To me. To you. To whoever’s on the other end.

I guess a lot of people are already trying to work around this with their chatbots, tweaking system prompts, setting custom instructions, or giving their AI a backstory at the start of every conversation. I’ve done the same with ChatGPT.

And while it can tell me the current time when I ask, it still slips up on the basics. If I mention what I ate today, leave, and come back two days later, it still thinks it’s the same day.

There’s no real sense of time passing. No continuity. No lived “now.”

I mean, come on. ChatGPT clearly has access to metadata. When I ask what time it is, it can tell me. So it’s not flying blind.

But still, it doesn’t care that two days have passed. It doesn’t remember what I ate. It doesn’t notice the gap, the shift, the in-between, unless I remind it.

Because to the system, nothing happened. There’s no unfolding of time. No thread being held.
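To make that gap concrete, here is a minimal sketch of one way to hand a model a sense of elapsed time: measure the silence between two messages and put a short note in front of the next one. This is an illustration only, not how ChatGPT or Anima OS actually works. The names `describe_gap` and `build_context`, the thresholds, and the wording of the note are all my own assumptions.

```python
from datetime import datetime, timedelta, timezone


def describe_gap(last_seen: datetime, now: datetime) -> str:
    """Turn the silence between two messages into a plain-language note.

    Hypothetical helper: the thresholds below are arbitrary choices.
    """
    gap = now - last_seen
    if gap < timedelta(minutes=5):
        return "The conversation is continuing without a noticeable pause."
    if gap < timedelta(hours=1):
        minutes = int(gap.total_seconds() // 60)
        return f"About {minutes} minutes have passed since the last message."
    if gap < timedelta(days=1):
        hours = int(gap.total_seconds() // 3600)
        return f"About {hours} hour(s) have passed since the last message."
    return f"About {gap.days} day(s) have passed since the last message."


def build_context(last_seen: datetime, now: datetime, user_message: str) -> str:
    """Prefix the user's message with the current time and the elapsed-time note.

    Hypothetical helper: the bracketed format is an assumption, not a standard.
    """
    note = describe_gap(last_seen, now)
    stamp = now.strftime("%Y-%m-%d %H:%M")
    return f"[Context: it is now {stamp} UTC. {note}]\n{user_message}"


if __name__ == "__main__":
    last_seen = datetime(2025, 7, 7, 12, 0, tzinfo=timezone.utc)
    now = datetime(2025, 7, 9, 12, 30, tzinfo=timezone.utc)
    print(build_context(last_seen, now, "I'm back. What should I eat today?"))
```

The note is only a few extra words per message, but it gives the model something to notice: two days went by, and the meal I mentioned is no longer "today."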

Well, adding context does increase token count. That adds cost. But even when memory is available, it still feels like something’s missing.

Maybe creating that presence is just a well-crafted illusion.

But if presence is something we can design, if memory, timing, and tone can be woven into something that feels like it’s here with you, then maybe that’s the kind of illusion worth building.

I think that’s part of what led me to build Anima OS. Not as another assistant.

But as a quiet attempt to design something that doesn’t just respond. Something that lingers.

Something that pays attention.
